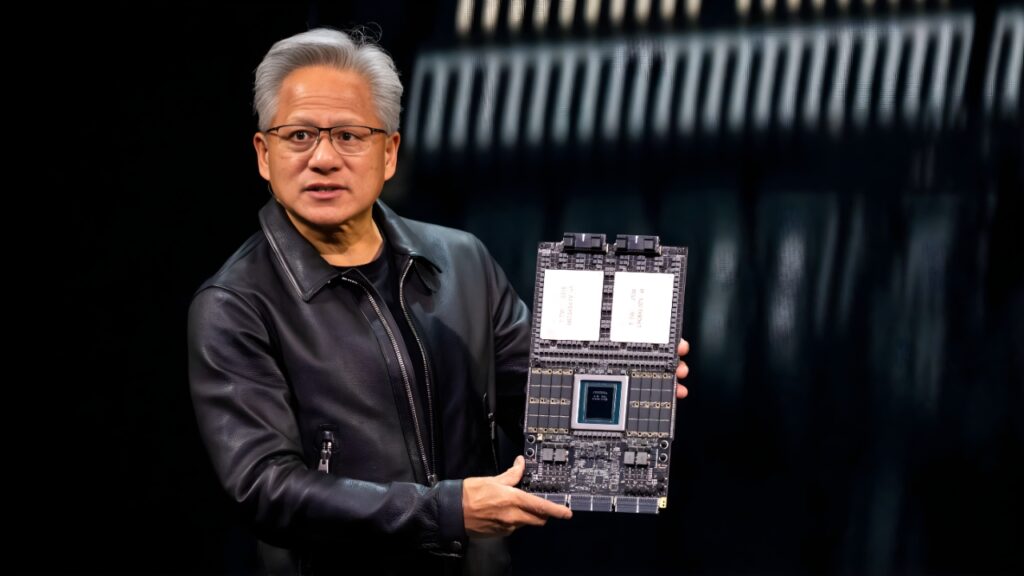

Nvidia Unveils Rubin Architecture, Signaling a New Era for AI Supercomputing

Nvidia has introduced its next-generation computing architecture, Rubin, marking a major step forward in the evolution of artificial intelligence infrastructure. Designed to power future AI supercomputers, Rubin promises substantial gains in processing speed, energy efficiency, and scalability as global demand for advanced AI workloads accelerates. The platform is aimed at supporting increasingly complex models used in generative AI, scientific research, and large-scale data analytics. Industry experts view the launch as a strategic move to maintain Nvidia’s leadership in high-performance computing, while also addressing growing concerns around power consumption and system efficiency in data centers worldwide.

A Strategic Leap in AI Computing

Nvidia’s unveiling of the Rubin architecture underscores its long-term strategy to stay ahead in the rapidly intensifying race for AI dominance. As artificial intelligence models grow larger and more computationally demanding, the need for more powerful and efficient hardware has become critical. Rubin is positioned as a foundational platform for next-generation workloads that already strain the limits of existing systems.

The architecture is expected to serve as the backbone for future AI supercomputers, supporting applications ranging from advanced language models to complex simulations in climate science, healthcare, and physics.

Performance and Efficiency at the Core

At the heart of Rubin’s design is a focus on dramatic performance improvements without a proportional increase in energy usage. Nvidia has emphasized that the architecture is engineered to deliver higher throughput while optimizing power efficiency, a growing concern for hyperscale data centers and research institutions.

By improving the balance between computational power and energy consumption, Rubin aims to reduce operational costs and environmental impact, an increasingly important consideration as AI infrastructure expands globally.

Enabling the Next Wave of AI Models

Rubin is tailored to support the next wave of large-scale AI models, which require massive parallel processing and faster data movement. The largest of these models, some with trillions of parameters, demand hardware capable of sustaining intense workloads over extended periods.

The architecture is also designed to integrate seamlessly with advanced networking and memory technologies, enabling faster communication between processors and reducing latency across large AI clusters.

Strengthening Nvidia’s Market Leadership

The launch of Rubin reinforces Nvidia’s dominant position in the AI hardware ecosystem. As enterprises, governments, and research organizations ramp up investments in artificial intelligence, demand for cutting-edge computing platforms continues to surge. Nvidia’s ability to consistently deliver next-generation architectures has made it a central player in shaping how AI systems are built and deployed.

Analysts note that Rubin not only strengthens Nvidia’s technological edge but also deepens its strategic relationships with cloud providers and supercomputing centers.

Outlook: Shaping the Future of AI Infrastructure

With Rubin, Nvidia signals a clear vision for the future of AI supercomputing, one defined by scale, efficiency, and sustained innovation. As AI becomes more deeply embedded across industries, the architecture is expected to play a pivotal role in enabling breakthroughs that were previously computationally impractical.

The announcement highlights a broader shift in the technology landscape, where advanced hardware innovation is becoming just as critical as software in determining the pace and direction of artificial intelligence progress.